🔬 Geometry-Supervised 3D Gaussian Splatting Rendering

Enrollment project for the NJU-3DV Lab (Prof. Yao Yao). The goal is to become familiar with the 3DGS pipeline and to learn basic research methods and engineering practices in 3D vision.

📅 Reports & Records

📑 Progress Reports (Notion)

Learning records and progress reports written during the project:

- 3.0 Assignment Announcement & Task Description: 👉 View Task

- 3.1 Progress Report 1.0 (2025.10.17): 👉 View Report

- 3.2 Progress Report 2.0 (2025.10.21): 👉 View Report

📝 Project Summary

💡 Code Implementation & Architecture Evolution

This project is a heavily customized build on top of the Pointrix framework. The core objective is to introduce Geometry Supervision and Temporal Consistency (Optical Flow) constraints to improve reconstruction quality under sparse views.

1. Renderer Modifications

Extended the standard splatting rasterization pipeline in MsplatNormalRender. In addition to RGB color, the renderer can now output Optical Flow in real time:

- Dual-Projection Mechanism: When rendering the current frame $t$, the 3D Gaussians are also projected into the camera view of the next frame $t+1$.

- Flow Calculation: The 2D motion of each Gaussian is the difference between its two projected centers, $\Delta uv = uv_{t+1} - uv_t$.

- Rasterization: This per-Gaussian motion is alpha-blended as an additional attribute, producing a differentiable rendered optical flow map for subsequent supervision.
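The three steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the code in MsplatNormalRender: the function names (`project`, `gaussian_flow`, `alpha_blend`), the pinhole camera model, and the single-pixel blending are all hypothetical simplifications, and the real renderer works on GPU tensors with a tile-based rasterizer.

```python
import numpy as np

def project(means, K, w2c):
    """Project (N, 3) world-space Gaussian centers to (N, 2) pixel coordinates.

    Assumes a pinhole camera with 3x3 intrinsics K and a 4x4 world-to-camera matrix.
    """
    homo = np.concatenate([means, np.ones((len(means), 1))], axis=1)
    cam = (w2c @ homo.T).T[:, :3]          # points in camera space
    uvw = (K @ cam.T).T                    # homogeneous pixel coordinates
    return uvw[:, :2] / uvw[:, 2:3]        # perspective divide

def gaussian_flow(means, K, w2c_t, w2c_t1):
    """Per-Gaussian flow: delta_uv = uv_{t+1} - uv_t (dual projection)."""
    return project(means, K, w2c_t1) - project(means, K, w2c_t)

def alpha_blend(attrs, alphas):
    """Front-to-back alpha blending of per-Gaussian attributes for one pixel.

    attrs:  (N, C) attributes (here: 2D flow), sorted near to far.
    alphas: (N,) opacity of each Gaussian at this pixel.
    """
    # Transmittance before each Gaussian: product of (1 - alpha) of those in front.
    transmittance = np.cumprod(np.concatenate([[1.0], 1.0 - alphas[:-1]]))
    weights = alphas * transmittance
    return (weights[:, None] * attrs).sum(axis=0)
```

Because the flow is blended with the same weights as color, gradients from a flow loss reach the Gaussian positions through both projections.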

2. Loss Function Design

To leverage geometric priors, multiple new loss functions were constructed in NormalModel:

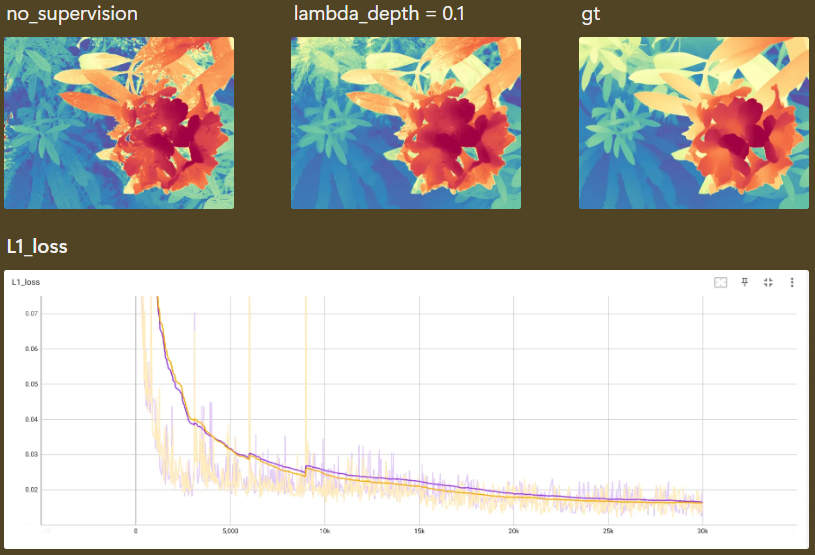

- Depth Loss: Uses a Scale-Shift Invariant alignment strategy. Because monocular depth estimates (e.g., from MoGe) have an ambiguous scale, the code aligns rendered depth with the prior depth via least squares, and applies Edge-aware Weighting to reduce the impact of alignment errors at object boundaries.

- Flow Loss: Constrains the rendered optical flow against ground-truth (GT) optical flow using a Charbonnier loss. A robustness step filters out noise from very small motions, and the weights are adjusted dynamically based on RGB image gradients to preserve texture edges.
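As a rough sketch of the two losses, the NumPy snippet below fits a scale and shift by least squares and evaluates a masked Charbonnier penalty. Several details are assumptions made for illustration rather than the behavior of NormalModel: the prior is mapped onto the rendered depth (not the reverse), the edge weight is `exp(-gamma * |grad|)` of the aligned prior, small motions are dropped with a hard `min_motion` threshold, and the RGB-gradient weighting of the flow loss is left out. The function names and default values are hypothetical.

```python
import numpy as np

def align_scale_shift(prior, rendered):
    """Least-squares fit of s, b such that s * prior + b ~= rendered."""
    x, y = prior.ravel(), rendered.ravel()
    A = np.stack([x, np.ones_like(x)], axis=1)
    (s, b), *_ = np.linalg.lstsq(A, y, rcond=None)
    return s, b

def edge_aware_depth_loss(rendered, prior, gamma=10.0):
    """L1 depth loss after alignment, down-weighted at depth discontinuities."""
    s, b = align_scale_shift(prior, rendered)
    aligned = s * prior + b
    gy, gx = np.gradient(aligned)                   # gradients along rows, columns
    weight = np.exp(-gamma * np.hypot(gx, gy))      # small weight at strong edges
    return float(np.mean(weight * np.abs(rendered - aligned)))

def charbonnier_flow_loss(flow_pred, flow_gt, eps=1e-3, min_motion=0.1):
    """Charbonnier penalty sqrt(d^2 + eps^2) over pixels with non-trivial GT motion.

    flow_pred, flow_gt: (H, W, 2) arrays in pixels.
    """
    mask = np.linalg.norm(flow_gt, axis=-1) > min_motion   # ignore tiny motions
    if not mask.any():
        return 0.0
    sq_err = np.sum((flow_pred - flow_gt) ** 2, axis=-1)
    return float(np.mean(np.sqrt(sq_err + eps ** 2)[mask]))
```

In training code the same operations would be written with differentiable tensor ops so that gradients flow back to the rendered depth and flow.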

3. Pipeline & Validation

Resolved the temporal discontinuity introduced by the training/validation split. The camera management logic in examples/supervise/model.py was refactored to introduce all_camera_model, which maintains the global Original Frame IDs. This ensures that even in a randomly sampled validation set, the physical "next frame" can still be indexed correctly for optical flow validation.
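The idea can be illustrated with a small, self-contained sketch. The `Camera` class and the helper names below are hypothetical and only stand in for whatever all_camera_model stores; the point is that the lookup goes through the original frame ID in the full sequence, not through the position inside the shuffled validation list.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional

@dataclass(frozen=True)
class Camera:
    frame_id: int   # original frame ID in the full, unsplit sequence
    name: str

def build_frame_index(all_cameras: Iterable[Camera]) -> Dict[int, Camera]:
    """Index every camera (train and validation) by its original frame ID."""
    return {cam.frame_id: cam for cam in all_cameras}

def next_frame_camera(cam: Camera, frame_index: Dict[int, Camera]) -> Optional[Camera]:
    """Return the physically next frame, or None for the last frame."""
    return frame_index.get(cam.frame_id + 1)

# Usage: the validation set is an arbitrary subset, yet the lookup still
# resolves against the full sequence.
all_cameras = [Camera(i, f"frame_{i:04d}") for i in range(5)]
frame_index = build_frame_index(all_cameras)
val_cameras = [all_cameras[3], all_cameras[0]]
for cam in val_cameras:
    nxt = next_frame_camera(cam, frame_index)
    print(cam.name, "->", nxt.name if nxt else None)
```

Returning `None` for the final frame lets the caller skip flow validation where no next frame exists.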

👀 Detailed Implementation: Check out examples/supervise/model.py and renderer.py in the GitHub repository.